Getting comprehensive place listings from Google Maps

2021 Update: The Google Maps API Places Nearby endpoint no longer has a free tier, so this method is probably prohibitively expensive now.

In 2018, I worked on a side project called Oinki, which would have been a vegan app for iPhone and Android, focused on finding the coolest vegan stuff in London. It was structured as a map app showing vegan restaurants and stores.

While building the app, one problem we faced was how to create the initial list of places. Was there some way to scrape place data from another service to gather basic information like place names and phone numbers? I turned to the Google Maps API to see if I could get a list of places from it, but never got as far as scraping any data.

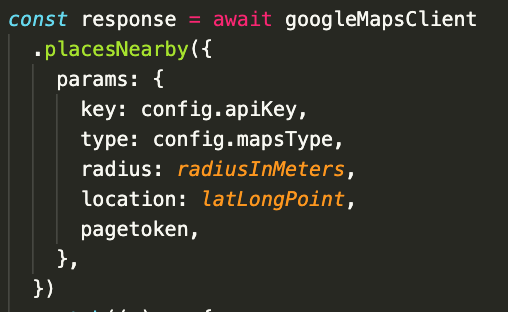

In March 2020, my sister asked me if I could get a list of restaurants for a COVID mutual aid effort she was working on in her area. I turned again to the Google Maps API to see if I could figure out how to get the data she needed. The part of the API that I decided to use was the Places Nearby endpoint, which returns a list of up to 60 places surrounding a latitude/longitude coordinate. An example call in Node.js might look like this:

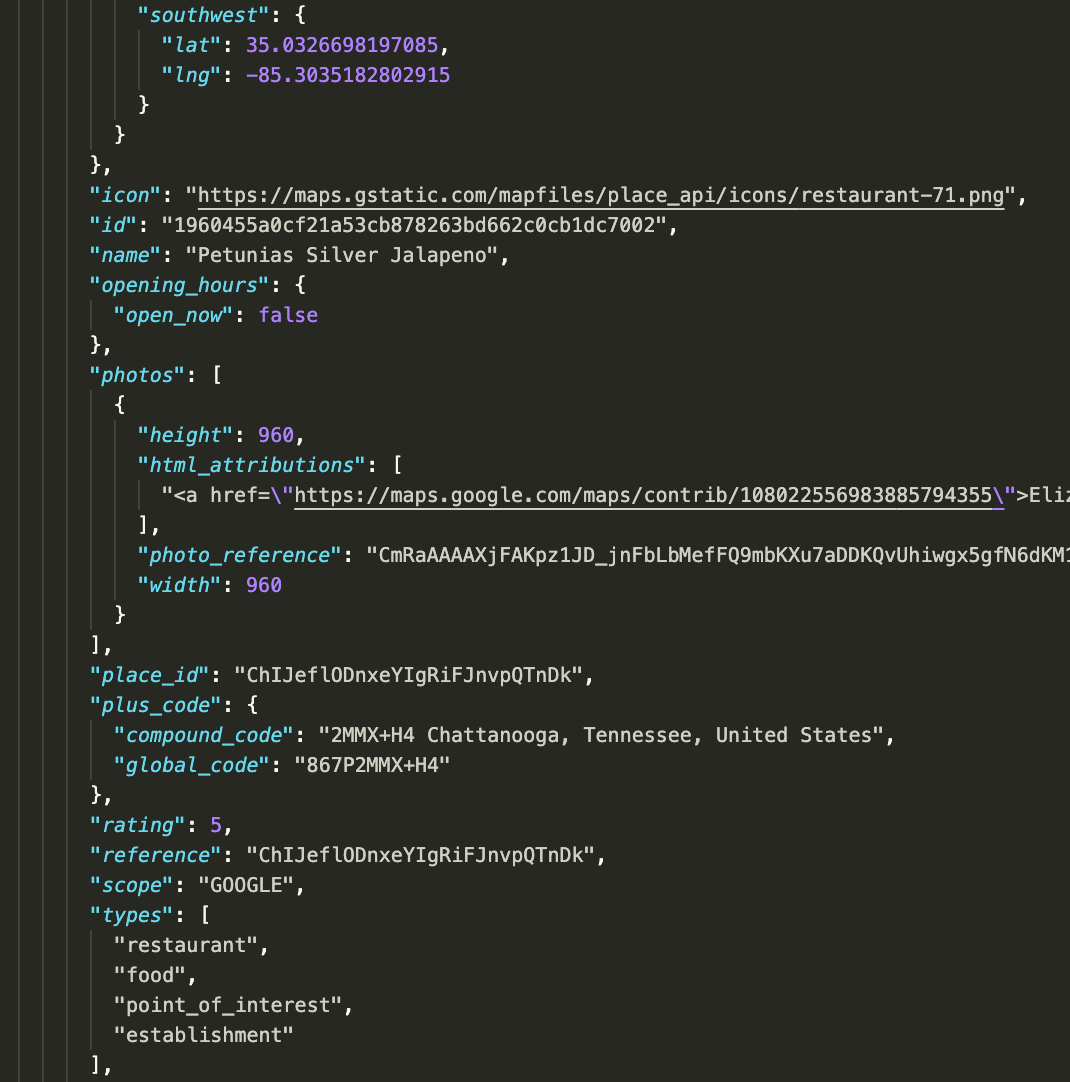

The API returns an array of JSON objects that correspond to places in your search area. These objects include things like ratings, phone numbers, opening hours and other information.
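For readers who cannot see the screenshot, here is a minimal sketch of how such a request could be put together against the HTTP endpoint directly. It is not the code from the image. The helper name `buildNearbySearchUrl`, the coordinates (roughly downtown Chattanooga), the radius, and the `YOUR_API_KEY` placeholder are all assumptions made for illustration.

```javascript
// Sketch: build a request URL for the Places API Nearby Search endpoint.
// The coordinates, radius, and API key used below are placeholders.
const NEARBY_SEARCH_URL =
  "https://maps.googleapis.com/maps/api/place/nearbysearch/json";

function buildNearbySearchUrl({ lat, lng, radiusMeters, type, apiKey }) {
  const params = new URLSearchParams({
    location: `${lat},${lng}`, // origin point of the search circle
    radius: String(radiusMeters), // how far from the origin to search, in meters
    type, // place type to filter on, e.g. "restaurant"
    key: apiKey,
  });
  return `${NEARBY_SEARCH_URL}?${params.toString()}`;
}

const exampleUrl = buildNearbySearchUrl({
  lat: 35.0456,
  lng: -85.3097,
  radiusMeters: 1500,
  type: "restaurant",
  apiKey: "YOUR_API_KEY",
});
console.log(exampleUrl);
```

Requesting that URL (for example with `fetch` in a recent version of Node.js) should return a JSON body whose `results` field holds the array of place objects described above.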

In my case, the API response didn’t include all of the places I was hoping to get from the search: some places that I knew were in the target area were missing. The cause was the 60 place limit that the API puts on an individual search. Since there were more than 60 places in my target area, a single search would never return the full list. I needed to figure out how to actually get all of the places within a given target area.

To make the following discussion clearer, let’s define some terms.

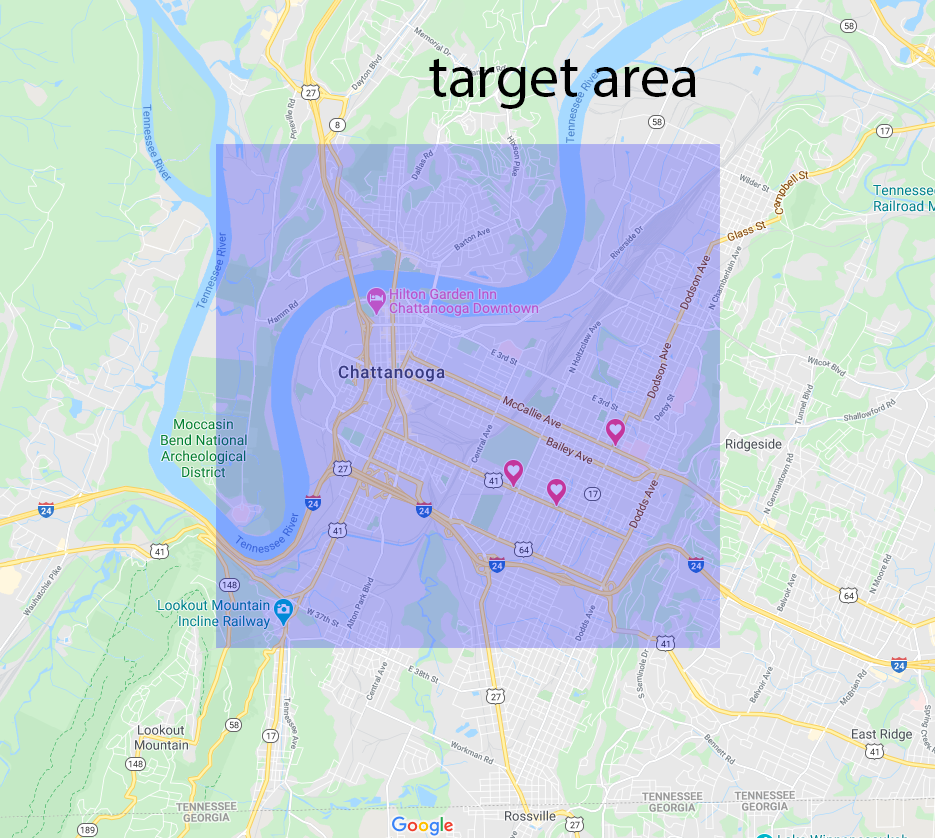

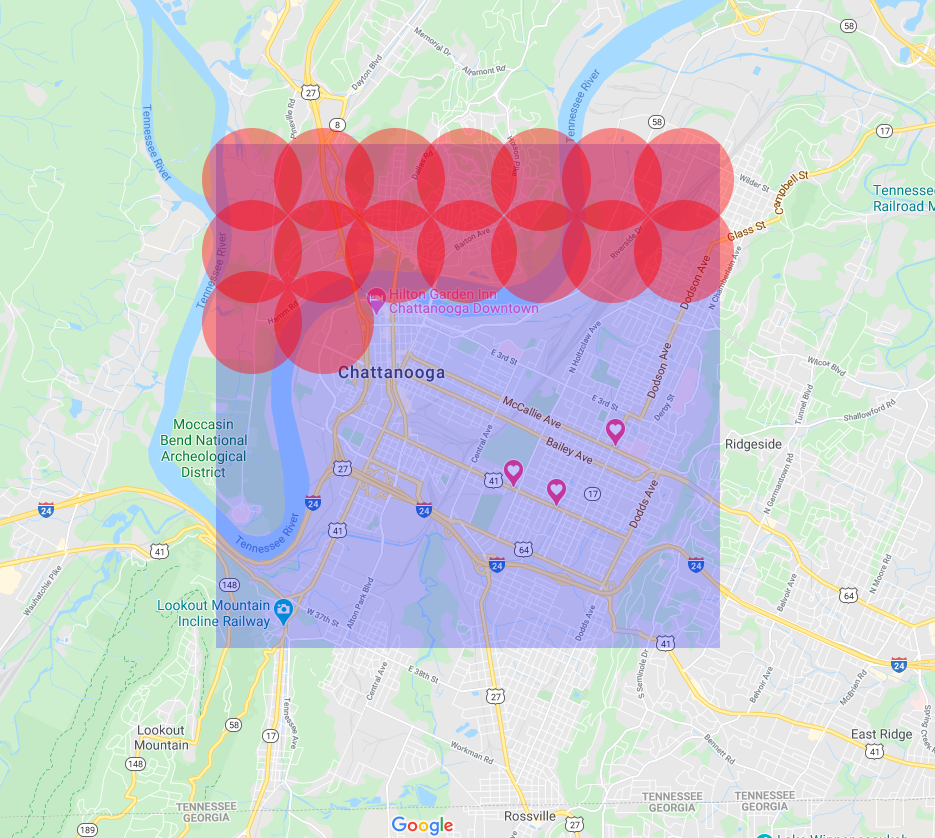

Target areas are the areas that we would like to search. In this example, the target area is downtown Chattanooga, Tennessee.

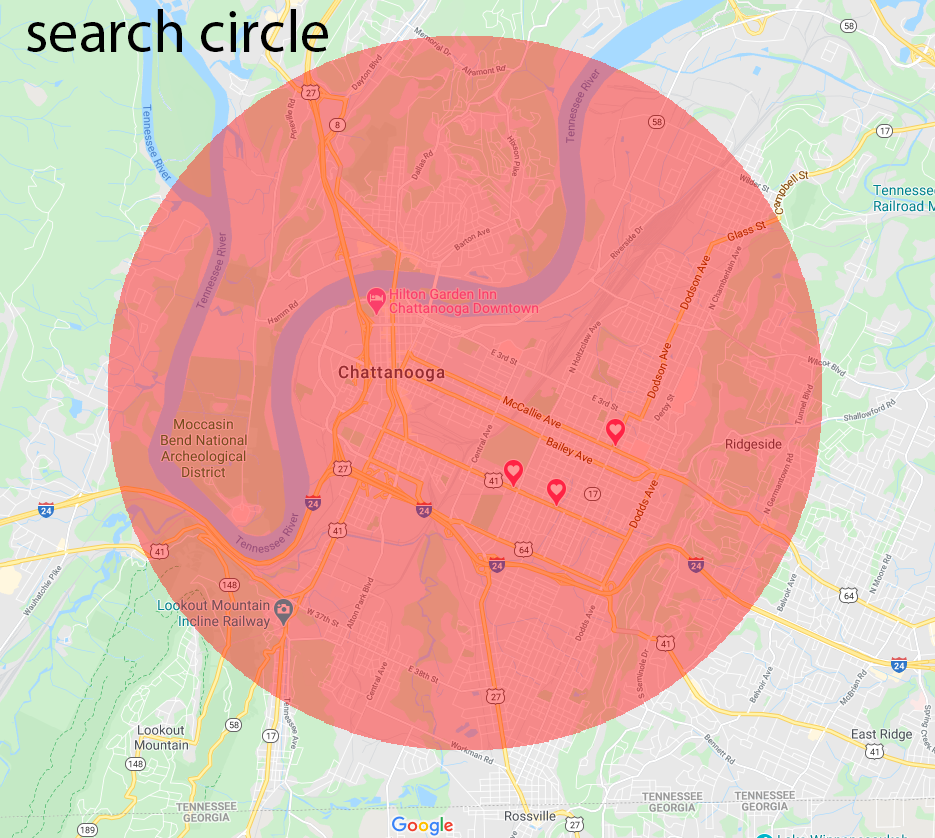

Google searches are circular, centered around an origin point. You supply the latitude and longitude of the origin point in the API call, and a radius for how far from the origin you want to search.

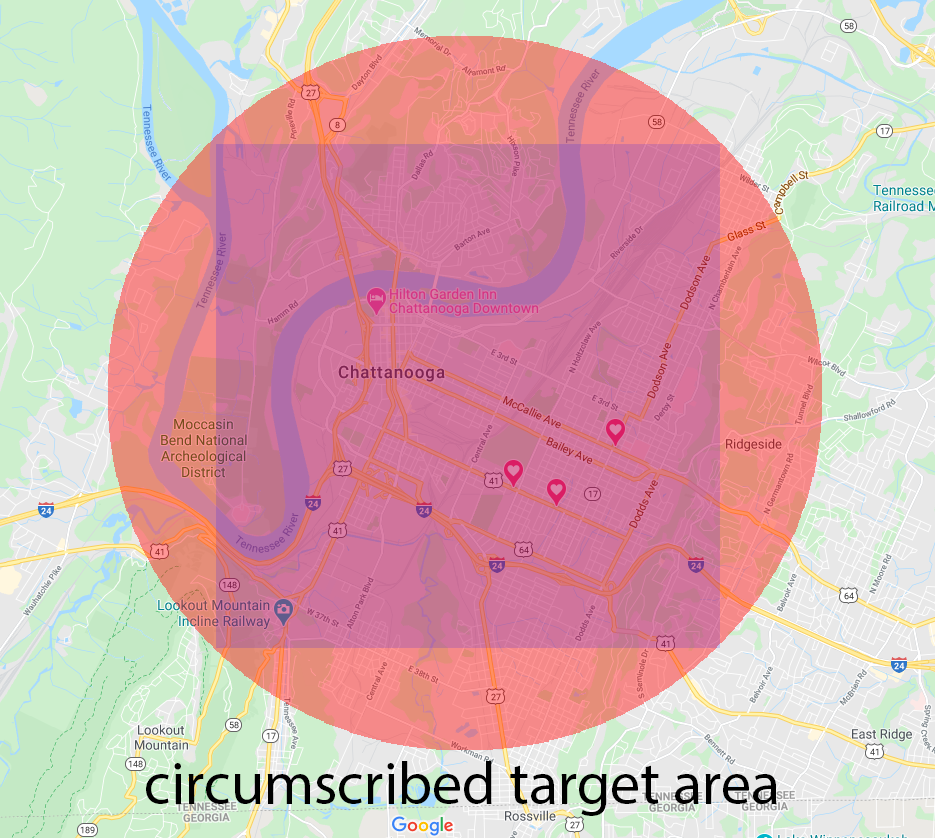

If we want to get back all of the places we are searching for in a target area, we can circumscribe the target area with a search circle.

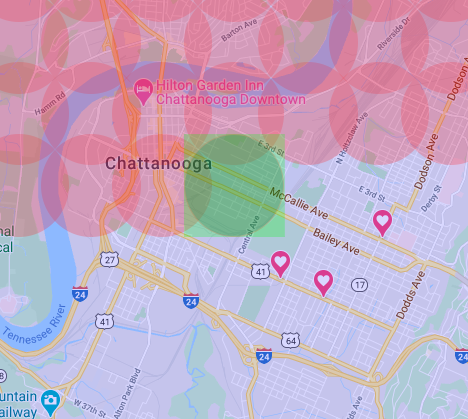

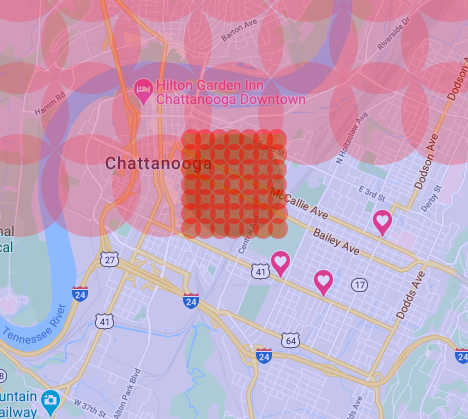
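To make circumscribing concrete, here is a rough sketch that computes a search circle covering a rectangular target area. It assumes the target area is given as a latitude/longitude bounding box (`south`, `north`, `west`, `east`), which is a representation chosen for this example, and it uses the haversine formula for distances. The function names are hypothetical, and the result is only meant for city-sized areas.

```javascript
const EARTH_RADIUS_METERS = 6371000; // mean Earth radius

const toRadians = (degrees) => (degrees * Math.PI) / 180;

// Great-circle distance between two { lat, lng } points (haversine formula).
function haversineMeters(a, b) {
  const dLat = toRadians(b.lat - a.lat);
  const dLng = toRadians(b.lng - a.lng);
  const h =
    Math.sin(dLat / 2) ** 2 +
    Math.cos(toRadians(a.lat)) *
      Math.cos(toRadians(b.lat)) *
      Math.sin(dLng / 2) ** 2;
  return 2 * EARTH_RADIUS_METERS * Math.asin(Math.sqrt(h));
}

// Search circle centered on the middle of the box, reaching its farthest corner.
function circumscribe(box) {
  const center = {
    lat: (box.south + box.north) / 2,
    lng: (box.west + box.east) / 2,
  };
  const corners = [
    { lat: box.south, lng: box.west },
    { lat: box.south, lng: box.east },
    { lat: box.north, lng: box.west },
    { lat: box.north, lng: box.east },
  ];
  const radiusMeters = Math.max(
    ...corners.map((corner) => haversineMeters(center, corner))
  );
  return { center, radiusMeters };
}

// Illustrative box roughly around downtown Chattanooga (made-up bounds).
const downtown = { south: 35.03, north: 35.06, west: -85.32, east: -85.29 };
console.log(circumscribe(downtown));
```

The returned `center` and `radiusMeters` correspond to the origin point and radius that the search call expects.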

Let’s consider the ideal solution: a single search circle circumscribing the whole target area. This would work if one call to the API returned all of the results for the area, but the API only returns up to 60 places. Since the ideal solution was not possible, I borrowed a method that people use for searches in the real world: I divided my target area into a grid of smaller target areas and ran a search in each one.

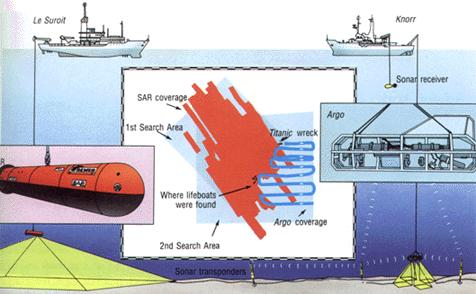

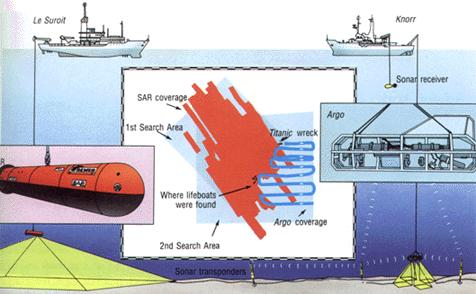

My inspiration for using a search grid came from my childhood obsession with the Titanic. The Titanic was a White Star Line passenger ship that sank in the North Atlantic in April 1912. When Robert Ballard discovered the wreck of the Titanic in 1985, he used two different remotely operated vehicles, SAR and Argo, to search his target area. The “mowing-the-lawn” pattern (sweeping back and forth across the target area) that Ballard employed gave me the idea to use essentially the same technique.

The search for the Titanic and the remotely operated vehicles used.

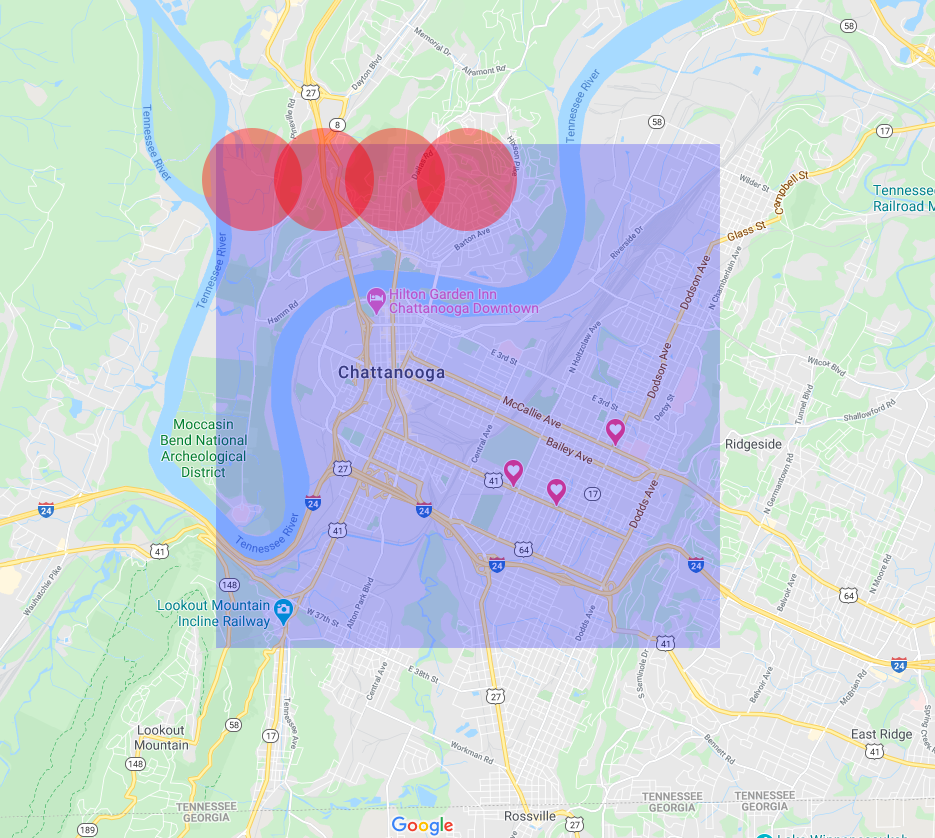

I wrote an algorithm to split the target area into a grid of coordinates around which I centered my searches. For each point on the grid, I executed a search. Once all of the searches have run, together they cover the original target area.
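The grid step could be sketched roughly as follows. This is not the code from the argo repository. It assumes the target area is a latitude/longitude bounding box, converts degrees to meters with a flat-earth approximation that is only reasonable for city-sized areas, and gives each cell a search radius of half the cell's diagonal so that the circle covers the whole cell. The function name and parameters are hypothetical.

```javascript
const METERS_PER_DEGREE_LAT = 111320; // approximate length of one degree of latitude

// Returns one { lat, lng, radiusMeters } search per grid cell.
function buildSearchGrid(box, cellSizeMeters) {
  const midLat = (box.south + box.north) / 2;
  // A degree of longitude shrinks with the cosine of the latitude.
  const metersPerDegreeLng =
    METERS_PER_DEGREE_LAT * Math.cos((midLat * Math.PI) / 180);

  const heightMeters = (box.north - box.south) * METERS_PER_DEGREE_LAT;
  const widthMeters = (box.east - box.west) * metersPerDegreeLng;

  // Use enough rows and columns that no cell side exceeds cellSizeMeters.
  const rows = Math.max(1, Math.ceil(heightMeters / cellSizeMeters));
  const cols = Math.max(1, Math.ceil(widthMeters / cellSizeMeters));
  const latStep = (box.north - box.south) / rows;
  const lngStep = (box.east - box.west) / cols;

  // Half the cell diagonal: the circle around the cell center reaches its corners.
  const radiusMeters = Math.hypot(heightMeters / rows, widthMeters / cols) / 2;

  const searches = [];
  for (let row = 0; row < rows; row++) {
    for (let col = 0; col < cols; col++) {
      searches.push({
        lat: box.south + (row + 0.5) * latStep, // center of this cell
        lng: box.west + (col + 0.5) * lngStep,
        radiusMeters,
      });
    }
  }
  return searches;
}

// Illustrative box roughly around downtown Chattanooga (made-up bounds).
const grid = buildSearchGrid(
  { south: 35.03, north: 35.06, west: -85.32, east: -85.29 },
  1000
);
console.log(`${grid.length} searches of radius ${Math.round(grid[0].radiusMeters)} m`);
```

Because neighboring circles overlap, the same place can come back from more than one search, so the combined results need to be deduplicated (for example by `place_id`).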

Fantastic! That is a nice solution. But what if an API call still returns 60 results?

The solution to this issue is also simple, as it turns out. If a search in one of the subdivided areas comes back with the maximum of 60 results, there may be more places that were cut off, so the algorithm can divide that area into its own grid and execute smaller searches. We can then repeat this as necessary until every search returns fewer than 60 results.
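Here is a small sketch of that recursion, under a few stated assumptions. It splits a saturated area into four quadrants (a 2×2 grid, which is a simplification chosen for this example), deduplicates places by their `place_id`, and stops at a maximum depth as a safety net. The `searchBox` argument is a hypothetical function that runs one search covering the given bounding box and returns its places. It is synchronous here to keep the sketch short, whereas a real API call would be asynchronous.

```javascript
const RESULT_CAP = 60; // a single search never returns more places than this

// Split a bounding box into four equal quadrants.
function splitIntoQuadrants(box) {
  const midLat = (box.south + box.north) / 2;
  const midLng = (box.west + box.east) / 2;
  return [
    { south: box.south, north: midLat, west: box.west, east: midLng },
    { south: box.south, north: midLat, west: midLng, east: box.east },
    { south: midLat, north: box.north, west: box.west, east: midLng },
    { south: midLat, north: box.north, west: midLng, east: box.east },
  ];
}

// Search a box and, if the search hit the cap, recurse into its quadrants.
// Returns a Map from place_id to place, so overlapping searches do not
// produce duplicates.
function searchExhaustively(box, searchBox, found = new Map(), depth = 0, maxDepth = 8) {
  const results = searchBox(box);
  for (const place of results) {
    found.set(place.place_id, place);
  }
  // Hitting the cap means some places may have been cut off.
  if (results.length >= RESULT_CAP && depth < maxDepth) {
    for (const quadrant of splitIntoQuadrants(box)) {
      searchExhaustively(quadrant, searchBox, found, depth + 1, maxDepth);
    }
  }
  return found;
}
```

The depth limit guards against an area that stays saturated however small it gets, for example a single building with more than 60 matching places.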

With the algorithm, I was able to get the API to return all of the places in my target area. I cleaned the data, converted it to a CSV, and sent it to my sister, who now has a list of all of the restaurants in her area.
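The cleanup and CSV step might look something like the sketch below. It is an assumption about one reasonable way to do it, not the code that was actually used. It drops duplicate places by `place_id` and writes a few fields that Places Nearby results normally carry (`name`, `vicinity`, `rating`, `place_id`). The function names and the choice of columns are illustrative.

```javascript
// Quote a field if it contains a comma, quote, or line break (CSV escaping).
function escapeCsvField(value) {
  const text = value === undefined || value === null ? "" : String(value);
  return /[",\r\n]/.test(text) ? `"${text.replace(/"/g, '""')}"` : text;
}

// Deduplicate places by place_id and serialize the chosen columns as CSV.
function placesToCsv(places, columns = ["name", "vicinity", "rating", "place_id"]) {
  const unique = [
    ...new Map(places.map((place) => [place.place_id, place])).values(),
  ];
  const header = columns.join(",");
  const rows = unique.map((place) =>
    columns.map((column) => escapeCsvField(place[column])).join(",")
  );
  return [header, ...rows].join("\n");
}
```

The resulting string can be written to disk with `fs.writeFileSync("places.csv", placesToCsv(places))` and opened in any spreadsheet program.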

This was a fun project for me. It was nice to approach a problem that was slightly outside of my normal constraints, although it was an artificial problem. If you want to check out the code that came out of the project, you can find it here: http://github.com/samuelfullerthomas/argo

I named the project argo in homage to the video sled that discovered the Titanic, also named Argo.

Further Reading

I would be remiss to not mention that I did not come up with this method alone - it has been written about before, which I discovered in the course of writing this article. There are, I’m sure, many more write-ups, but these were two that I found immediately.

Ilia, a data scientist in London, used the same method: https://iliauk.wordpress.com/2015/12/18/data-mining-google-places-cafe-nero-example/

This Stack Overflow answer points to the same solution as well: https://stackoverflow.com/questions/13070477/how-can-i-get-more-than-60-results-from-the-google-places-api